In today’s article, I’m going to discuss the latest Google updates. I’ll also show you how to minimise the impact of new Google updates on your rankings.

Google modifies its systems continuously to keep the quality of the SERPs high.

As you know, Google’s web index is enormous, and it’s almost impossible to handle that much data manually. That’s why Google built automated systems known as ‘Google algorithms’. Each algorithm is developed to complete a specific task.

Whenever Google identifies suspicious activity that dilutes the quality of the SERPs, it updates its systems so that the spammers’ strategy no longer works.

And that’s the idea behind Google updates.

In fact, according to Moz, Google revises its algorithms around 500 to 600 times a year.

This shows that Google monitors SERP data continuously so that it can penalise low-quality content.

That’s why you often see a big swing in traffic whenever Google updates its systems.

Recently, I saw a drop in traffic because of a recent Google update called ‘Maccabees’. The primary focus of Maccabees appears to have been filtering out bad links and irrelevant or thin content. (I will discuss it in a minute, so sit tight.)

Why was my site affected? Because of irrelevance: some articles on my blog have no relevance to the rest of my content.

Now, let’s wrap up this introduction and look at what Google algorithms are, and which major Google updates influence search results.

What is the Google Algorithm?

An algorithm is a set of rules developed to perform a specific task. That task might be collecting data, analysing content or arranging content.

Whenever you publish an article, Google’s spiders first crawl it to work out what your content is about.

Crawling is also part of the algorithm. Google’s spiders look at relevant phrases, keywords, LSI words, visuals, and inbound and outbound links to measure page quality.

Google considers a ton of parameters when measuring the quality of a page. That’s why it developed automated systems to improve performance.

Big recent Google updates: –

- Maccabees (December 2017)

- RankBrain (announced October 2015)

- Hummingbird (September 2013)

- Pigeon (July 2014)

- Penguin (April 2012)

- Panda (February 2011)

1. Maccabees: New update in Google

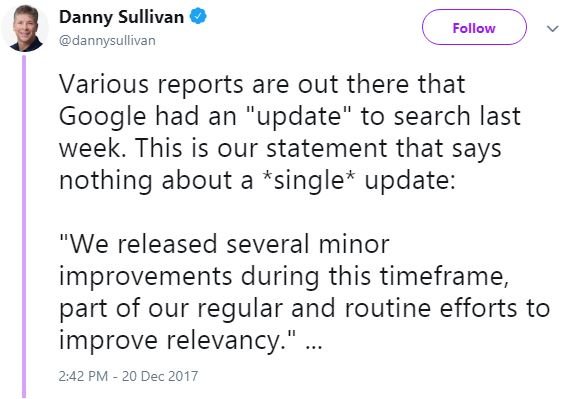

As I said, Google modifies its systems regularly, so there are always new things to learn. In December 2017, a new update nicknamed ‘Maccabees’ rolled out.

Google has not officially confirmed this update, but the rumours persist. Bloggers, SEO experts and digital marketers are all sharing their views on it.

Many say that this update targets mobile SERPs, doorway pages and sites that don’t have schema markup.

And some experts say that its primary focus is to ‘penalise low-quality content’ and to catch keyword permutations.

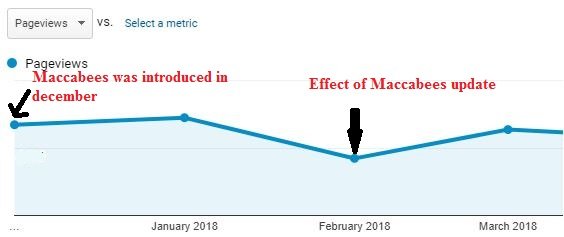

Whatever others are saying, I want to give my own opinion and tell you what happened to my site after the update launched.

In December, everything was normal, and in January 2018 my traffic increased by 8 to 9%.

But in February 2018, my traffic dropped by 42%, which made me curious about the cause. I believe it was the impact of the Maccabees update.

Let’s see what the possible reasons are: –

- It could be because of irrelevant content. Almost 25% of the posts on my blog were unrelated to the rest (75%) of the posts, so I deleted all the irrelevant posts.

- I realised that this 25% of posts were very weak and low in quality.

- My overall link profile was not that good.

- I lost 50 keywords from the first page of Google.

So that was the state of my site after the update. Let’s summarise the possible signals that could prompt Maccabees to hit your site.

- Irrelevant content

- Low-quality content

- Doorway pages: a bad SEO practice where webmasters create low-quality pages just to rank for a particular keyword, adding no value for visitors.

- Keyword permutation: this means publishing different articles that target very similar keywords. For example, say you have a post that already ranks for ‘SEO strategies’. If you publish another post optimised for a very similar phrase, such as ‘SEO strategies for blogger’, it doesn’t make sense. You don’t need two different landing pages for permutations of the same keyword.
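To spot keyword permutations on your own blog, you can compare the target keywords of your posts and flag pairs that share most of their words. The sketch below is only a rough illustration: the stop-word list, the 0.6 threshold, the function names and the post slugs are all my own assumptions, and this is not how Google detects permutations.

```python
from itertools import combinations

# Hypothetical stop-word list; extend it for your own niche.
STOP_WORDS = {"for", "the", "a", "an", "to", "of", "in", "and"}


def keyword_tokens(keyword):
    """Lower-case a keyword, drop stop words and strip a trailing 's'."""
    tokens = set()
    for word in keyword.lower().split():
        if word in STOP_WORDS:
            continue
        # Naive singularisation so 'bloggers' and 'blogger' match.
        if word.endswith("s") and len(word) > 3:
            word = word[:-1]
        tokens.add(word)
    return frozenset(tokens)


def jaccard(a, b):
    """Share of words the two keyword sets have in common (0.0 to 1.0)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def find_permutations(target_keywords, threshold=0.6):
    """Return (slug, slug, score) for posts whose keywords overlap heavily."""
    pairs = []
    for (slug_a, kw_a), (slug_b, kw_b) in combinations(target_keywords.items(), 2):
        score = jaccard(keyword_tokens(kw_a), keyword_tokens(kw_b))
        if score >= threshold:  # threshold is an arbitrary assumption
            pairs.append((slug_a, slug_b, round(score, 2)))
    return pairs


# Made-up posts, keyed by slug, with the keyword each one targets.
posts = {
    "seo-strategies": "SEO strategies",
    "seo-strategies-blogger": "SEO strategies for blogger",
    "youtube-seo": "YouTube SEO guide",
}
print(find_permutations(posts))
```

Any pair this flags is a candidate for merging into a single, longer article.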

Check this out to see what other bloggers and SEOs are experiencing with the Maccabees update.

2. RANKBRAIN

In late 2015, Google announced an artificial intelligence system called RankBrain. The primary focus of RankBrain is to deliver more relevant search results to the user.

In fact, Google has also confirmed that it is the third most important ranking signal, behind high-quality content and backlinks.

It is a machine learning technology that lets Google’s system learn by itself.

RankBrain turns words and phrases into mathematical entities called vectors, uses them to predict related phrases or words, and delivers the best-matched results accordingly.

It has reportedly improved SERP accuracy by about 10%, which is a considerable achievement.

Its aim is to give the user relevant results immediately. It adjusts the search results based on what it has learned. RankBrain does not just match keywords; it tries to understand the intent behind them.

Now, let’s understand how RankBrain works.

It considers two things when filtering search queries:

- The meaning of the keyword you typed in, rather than a perfect keyword match.

- User behaviour.

There are some queries that Google has never seen before. In fact, about 15% of the queries Google receives every day are new to it.

In such cases, Google can’t find exactly matching content because the phrase is unknown. But RankBrain has made this simpler.

Before Google searches its index, RankBrain converts those terms into meaningful queries that closely match the searcher’s intent and that the index already knows.

These modified queries are then used to filter the search results, which are sent to the user.

So now you know how RankBrain interprets a raw keyword and works out what it means.

Now let’s talk about the Hummingbird algorithm…
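To make the idea of mapping an unknown query onto a known one more concrete, here is a toy sketch. RankBrain’s internals are not public, so this is not how it really works: I’m using simple word-count vectors and cosine similarity instead of learned vectors, and the function names and sample queries are made up for illustration.

```python
import math
from collections import Counter


def vectorise(text):
    """Turn a query into a bag-of-words vector (word -> count)."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def closest_known_query(query, known_queries):
    """Pick the known query whose vector is most similar to the new one."""
    q = vectorise(query)
    return max(known_queries, key=lambda k: cosine(q, vectorise(k)))


# Hypothetical queries the search engine has already seen.
known = ["best running shoes", "how to bake bread", "cheap flights to london"]

# A long query that has never been seen before.
print(closest_known_query("what are the best shoes for running a marathon", known))
```

Here the never-seen long query lands on ‘best running shoes’ because it shares the most words with it. A real system would rely on meaning rather than shared words.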

3. HUMMINGBIRD UPDATE

It is considered one of the most significant Google algorithm changes, one that reshaped the SERPs entirely.

In fact, Matt Cutts stated that it would affect 90% of all searches.

A year before launching Hummingbird, Google introduced the Knowledge Graph to give people quick answers whenever they search for places, people or things.

Before 2013, Google struggled to work out the exact meaning of keywords and phrases, which resulted in poor SERP quality.

The release of Hummingbird enabled Google to understand search queries better and faster.

Google is now able to understand natural language and give the user more precise and faster results.

OK, that was the background on Hummingbird. Next, let’s look at the features of this update…

- It enables Google to deal with longer queries.

- Google can now understand the context of search queries.

- Local search results differ for each individual based on geolocation and on past data stored in their Google My Activity account.

3. PIGEON

Local SEO is also one of the most critical SEO elements, especially for online business. And it’s evident because people search for local business through Google to get some initial information before they visit any shop, mall or restaurant.

But what Google found later is that there are less relevant results when people make local queries.

That’s why Google introduced a new update called ‘’pigeon” to improve the local search results. And since 2014 Google has released a series of pigeon updates.

Impact of pigeon update on SERPs:

- It improves local search results by providing users with a knowledge graph, distance Map, spelling checker and more other information related to local business.

- Google keeps secrets, but SEOs did studies and found that some local businesses are losing and some are gaining the rankings locally.

- Google uses 100 ranking signals to understand the local search results better.

- Local search results are different before and after pigeon update

- It adds local signals to provide the value to other local directories like Yelp & HomeAdvisor.

- Ten/ seven local business pack are now shrunk to three local pack.

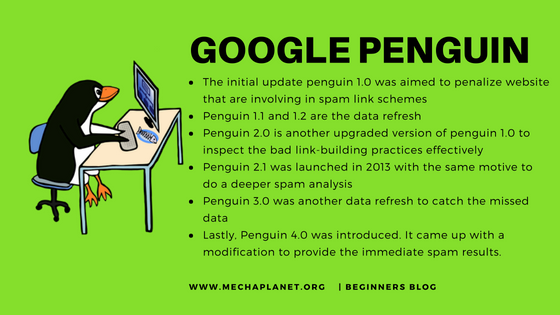

4. PENGUIN

It was developed by Google to penalise sites that are involving bad link building and keyword stuffing process.

Initially, it was introduced as a separate tool but in 2016 Google confirmed that Penguin is now the core part of the algorithm.

The latest Google update is penguin 4.0 which is released on September 2016. The initial update was introduced in 2012 and since then Google updating penguin to make it more powerful to track the spam links and keyword stuffing effectively.

This new version brings an additional feature that enables bloggers or webmasters to see the immediate improvement of their ranking after removal of spam backlinks. It means they don’t have to wait for the upcoming update to see the effects.

5. PANDA

It is one of the earliest Google updates, and it still affects sites that publish low-quality content.

It impacted around 12% of Google search results.

Like Penguin, Panda has gone through a series of updates to refine the algorithm.

Your site may be penalised because of the following reasons: –

- Publishing thin content

- Technically weak sites may lose their rankings (DNS or server errors)

- Content spinning

- Very short articles

- Spelling mistakes

- Page break

- Broken internal or external links

- Poor user experience

So that was all about the latest Google updates. Now you’re going to learn how to limit their effect…

How To Minimize The Impact of Algorithms on Your Rankings

Google has sophisticated algorithms that help it filter redundant and spam results out of its index.

This not only improves SERP quality but also improves the user’s search experience.

But at the same time, this complexity makes it tough to hold the first position on Google.

Have you ever wondered why you lose traffic?

The simple answer is often that you tried to cheat Google’s system. It doesn’t matter how you did it: if you try to game Google’s system, Google will penalise your efforts.

So you’re better off obeying the search engine’s rules to keep your site’s traffic stable.

So, how do you improve your Google search ranking without resorting to lousy SEO practices?

The short answer is ‘Never disobey the Google rules.’

Here I’m going to list some SEO best practices that minimise the chances of getting penalised by any of these algorithms.

- Read Google’s SEO Starter Guide to understand the boundaries Google sets.

- Never cross their boundary lines.

- Never do keyword stuffing.

- Try to keep your article unique and fresh.

- Don’t use content spinner tools to generate content.

- Keep your link profile clean.

- Disavow toxic backlinks.

- Improve your site performance.

- If you want to avoid or minimise a Panda penalty, make sure you always create unique, high-quality content. Never use content spinning software.

- Don’t over-optimise your content.

- If you want to keep your site safe from a Penguin penalty, improve your link quality and avoid over-optimising for keywords.

- Grow your local business by creating a business profile on local directories like Yelp & HomeAdvisor, as well as Google My Business.

- Create content that appeals to users, because Google can understand natural language.

- Don’t rely on keyword density. Now you can rank your page without including exact keywords.

- Improve your site loading speed.

- Don’t use keyword permutations to create multiple pieces of content targeting similar keywords. Instead, write a single long article that covers all the related terms.

- Don’t keep adding new categories to your site, because it dilutes your topical authority and reduces content relevance.
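If you want a quick sanity check against keyword stuffing, you can measure how much of an article a single phrase takes up. The sketch below is only a rough self-check: the function name and sample text are my own, and Google does not publish any ‘safe’ density figure, so treat the result as a warning sign rather than a target to optimise for.

```python
import re


def keyword_density(text, phrase):
    """Share of the words in `text` taken up by `phrase` (0.0 to 1.0)."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    phrase_words = phrase.lower().split()
    if not words or not phrase_words:
        return 0.0
    n = len(phrase_words)
    # Count every position where the phrase appears word for word.
    hits = sum(
        1 for i in range(len(words) - n + 1) if words[i:i + n] == phrase_words
    )
    return hits * n / len(words)


# A deliberately stuffed, made-up sentence.
sample = "SEO tips for SEO beginners: the best SEO tips are simple SEO tips."
density = keyword_density(sample, "SEO tips")
print(f"{density:.0%} of the words belong to the phrase")
```

In this stuffed sample, the phrase accounts for 6 of the 13 words, which no natural article would come close to.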

Conclusion:

The best way to minimise Google penalties is to know the Google updates well: why Google makes these changes, and how you can improve your search rankings without infringing its policies.

There is one more question you might want to ask:

Question: –

I’m creating high-quality content, building high-authority backlinks and optimising my content for keywords, so why isn’t Google ranking me at the top?

First, you’re not alone. There are thousands of articles in Google’s index on the same topic, so yours is not the only one.

Secondly, people often share or submit your website link on toxic forums, and this is completely out of your control. So you need to keep checking your website’s link profile to make sure your site is safe. If you find bad links that you can’t get removed, consider asking Google to ignore them with its disavow tool.

It may also be that you are targeting hard keywords. For example, if you try to rank for a keyword like ‘SEO’, you are wasting your time and effort, because top brands already hold the top positions and there is no room for your site. You’re better off targeting low-competition long-tail keywords.
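Google’s disavow tool takes a plain text file with one entry per line: either a full URL, or `domain:` followed by a domain name to cover a whole site. Lines starting with `#` are comments. Here is a minimal sketch that builds such a file from a list of bad links. The function name and the sample links are made up for illustration, and you should review the output by hand before uploading anything.

```python
from urllib.parse import urlparse


def build_disavow_file(bad_urls, whole_domains=()):
    """Return disavow-file text: 'domain:' entries first, then single URLs."""
    lines = ["# Disavow list, review manually before uploading"]
    seen = set()
    for domain in whole_domains:
        entry = f"domain:{domain.lower()}"
        if entry not in seen:
            seen.add(entry)
            lines.append(entry)
    for url in bad_urls:
        host = urlparse(url).hostname or ""
        # Skip URLs already covered by a whole-domain entry.
        if f"domain:{host}" in seen:
            continue
        if url not in seen:
            seen.add(url)
            lines.append(url)
    return "\n".join(lines) + "\n"


# Made-up links on the reserved .example TLD.
content = build_disavow_file(
    ["http://spam-forum.example/thread/123", "http://links.example/page.html"],
    whole_domains=["spam-forum.example"],
)
print(content)
```

Disavowing a whole domain is the blunter option, so I’d keep it for sites where every link is clearly spam.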

If you like this article then share it with others on social media platforms.
